2023, year of open LLMs (huggingface.co)

A very capable chat model built on top of the new Mistral MoE model, trained on the SlimOrca dataset for 1 epoch, using QLoRA.

SD-Turbo is a fast generative text-to-image model that can synthesize photorealistic images from a text prompt in a single network evaluation. We release SD-Turbo as a research artifact, and to study small, distilled text-to-image models. For increased quality and prompt understanding, we recommend SDXL-Turbo....

I’ve been using TheBloke’s Q8 of huggingface.co/teknium/OpenHermes-2.5-Mistral-7B, but I think this one (huggingface.co/…/OpenHermes-2.5-neural-chat-7b-v3…) is killing it. Has anyone else tested it?

This new dataset release provides an efficient means of reaching performance on-par with using larger slices of our data, while only including ~500k GPT-4 completions....

We find GPT-4 judgments correlate strongly with humans, with human agreement with GPT-4 typically similar or higher than inter-human annotator agreement....

A short journey to long-context models....

The model uses 200k samples from different datasets to fine-tune Open-Orca/Mistral-7B-OpenOrca....

This model is based on Mistral7B-v0.1 and was trained on 1,112,000 dialogs. The maximum length during training was 2048 tokens....

Nous-Capybara-7B...

The model is trained on the author's own Orca-style dataset, plus some Airoboros data, apparently to increase creativity...

This release is trained on a curated filtered subset of most of our GPT-4 augmented data....

Context: Falcon is a popular free LLM. This is their biggest model yet, and they claim it’s now the best open model on the market.

cross-posted from: lemm.ee/post/6916266...

Hugging Face Transformers now officially supports AutoGPTQ. This is a pretty big deal and signals much wider adoption of quantized models, which is great for everyone!

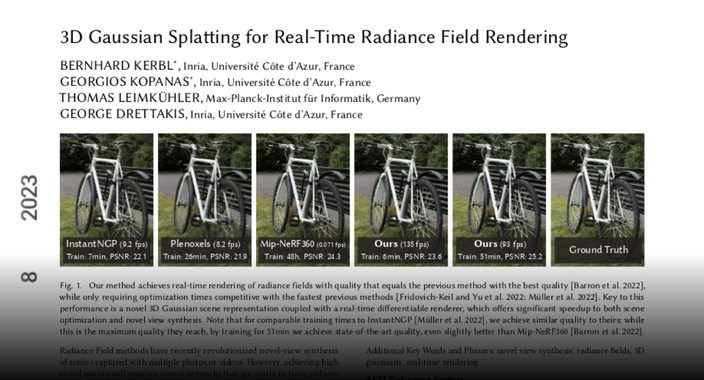

Achieves SOTA on quality AND on training time AND renders in real-time (60fps+)

These are the full weights. The quants are already incoming from TheBloke; I will update this post when they’re fully uploaded...

And of course the quants by TheBloke...