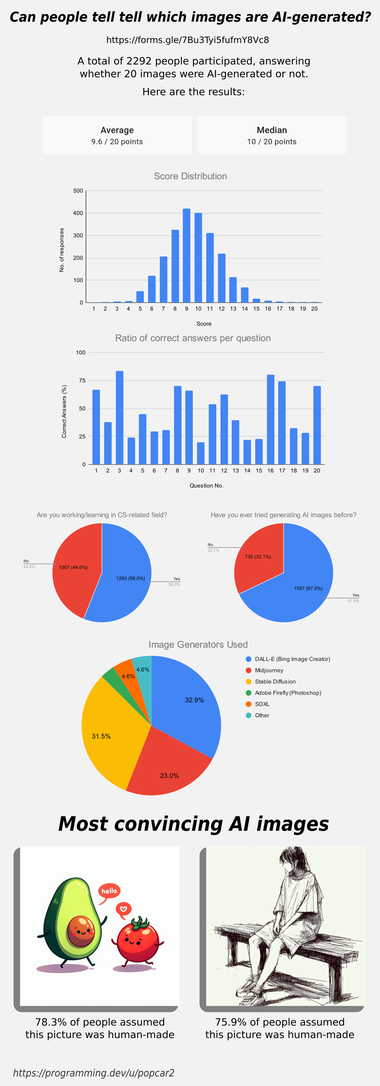

Results of the "Can you tell which images are AI generated?" survey

Previous posts: programming.dev/post/3974121 and programming.dev/post/3974080

Original survey link: forms.gle/7Bu3Tyi5fufmY8Vc8

Thanks for all the answers! Here are the results of the survey, in case you were wondering how you did.

Edit: People working in CS or a related field have a 9.59 avg score, while people who aren’t have a 9.61 avg.

People who have used AI image generators before have a 9.70 avg score, while people who haven’t have a 9.39 avg.

Edit 2: The data has changed slightly! Over 1,000 people have submitted results since I posted this image; check the dataset to see live results. Be aware that many people saw the image and comments before submitting, so some results were spoiled for them, which may be pushing the recent average higher: docs.google.com/…/1MkuZG2MiGj-77PGkuCAM3Btb1_Lb4T…
